I think it was about 10 or 11 at night. I’d been practicing interviews for a couple of hours by that point, working up to beating a timer with increasingly difficult problems. It must have been my fifth time implementing DFS that night, but this time under the pressure of a clock. Easy recursion with familiar keystrokes. Out loud, to no one in particular, I walked through the approach as I went along and took time at the end to explain the trade-offs. And for the first time, I finished the problem in under 45 minutes. My arms shot up into the air.

This is the first time I’ve ever studied for interviews. It’s the first time I’ve ever had to, if I’m being completely honest. I had offers from friends to help, which felt familiar and reminded me of college, but we’re not in college anymore, and we all have busy lives. So while I took a couple of people up on the offer once or twice, I spent most of my time studying alone. Well, maybe not alone.

Claude and I spent many nights preparing for interviews. It started out with me just telling Claude, "Help me practice for coding interviews." As you might imagine, that led to LeetCode grinding—which, if you’ve never done LeetCode, is demoralizing in a very specific way. I had never done LeetCode, and when I got to problems where the solution wasn’t immediately evident, I felt like a fool. I eventually got past that, and we made it all the way to dynamic programming, but it felt like a complete waste of time.

It eventually dawned on me that I needed to be just as specific in my prompting for interview practice as I was when building things with Claude Code, so I changed how I prompted in my practice sessions. “Do not make me grind LeetCode anymore. Let’s focus on concrete concepts: let’s start with an LRU cache.” I mean, my god, I was a software engineer already. I know things, right? So I decided to dig into those things and go through their implementation. LRU cache: maps, mutexes, and a doubly-linked list. Three solid topics in one question.
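For anyone who hasn’t put those three pieces together in a while, here is a minimal sketch of how they can fit, assuming Python. It is an illustration, not the code from those sessions, and every name in it (`LRUCache`, `_Node`, `get`, `put`) is hypothetical. The map gives O(1) lookup, the doubly-linked list gives O(1) reordering and eviction, and a single lock stands in for the mutex.

```python
import threading


class _Node:
    """One entry in the doubly-linked list (hypothetical helper)."""
    __slots__ = ("key", "value", "prev", "next")

    def __init__(self, key=None, value=None):
        self.key = key
        self.value = value
        self.prev = None
        self.next = None


class LRUCache:
    """Illustrative LRU cache: dict + doubly-linked list + one lock."""

    def __init__(self, capacity):
        if capacity <= 0:
            raise ValueError("capacity must be positive")
        self._capacity = capacity
        self._map = {}                 # key -> node, for O(1) lookup
        self._lock = threading.Lock()  # the "mutex"
        # Sentinel nodes: head.next is the most recently used entry,
        # tail.prev is the least recently used one.
        self._head = _Node()
        self._tail = _Node()
        self._head.next = self._tail
        self._tail.prev = self._head

    def _unlink(self, node):
        # Remove a node from the list in O(1).
        node.prev.next = node.next
        node.next.prev = node.prev

    def _push_front(self, node):
        # Insert a node right after the head sentinel in O(1).
        node.prev = self._head
        node.next = self._head.next
        self._head.next.prev = node
        self._head.next = node

    def get(self, key, default=None):
        with self._lock:
            node = self._map.get(key)
            if node is None:
                return default
            # A hit makes this entry the most recently used.
            self._unlink(node)
            self._push_front(node)
            return node.value

    def put(self, key, value):
        with self._lock:
            node = self._map.get(key)
            if node is not None:
                # Existing key: update in place and mark as recent.
                node.value = value
                self._unlink(node)
                self._push_front(node)
                return
            if len(self._map) >= self._capacity:
                # Evict the least recently used entry.
                lru = self._tail.prev
                self._unlink(lru)
                del self._map[lru.key]
            node = _Node(key, value)
            self._map[key] = node
            self._push_front(node)
```

With a capacity of 2, putting `"a"` and `"b"`, reading `"a"`, and then putting `"c"` evicts `"b"`, because `"a"` was touched more recently. The sentinel nodes are there so that inserting and unlinking never have to special-case an empty list. One coarse lock keeps the sketch simple, at the cost of serializing reads too, since even `get` rewrites the list.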

That’s when interview practice stopped feeling like a grind and started to feel more like… honing my craft. It’s not that I didn’t know how an LRU cache worked, but I hadn’t implemented a linked list since college, and I had to think for a second about how to keep the cache’s operations O(1). I’d actually been asked this question before in an interview, and I think I didn’t get the job just because I asked, “When’s the last time you actually implemented one of these, and why did you do it?”

Practicing for interviews stopped being practicing for interviews, and it became practicing for work. Oddly enough, I’m used to this kind of thing. I’m a musician, and while work is rehearsals and performances, that’s not practicing. Practicing happens outside of work. I had side projects early in my career, and college was college. Why have we decided that this kind of behavior is somehow bad? By putting in the work, I was inadvertently making my job easier and more enjoyable. Work felt less like work.

Abstractions that we use on a daily basis obscure much of what we’re actually doing. ORMs. Frameworks. Managed services. Cloud platforms. These have made it so that we can think less about what’s going on and focus on what we’re actually trying to do. But when these things fail, when we actually have to diagnose a critical production incident, it quickly becomes obvious who doesn’t understand the underlying mechanics that their work rests upon. They have to spend considerable time discovering the basic operational principles that govern it. And now we add our most powerful abstraction yet, which requires the same care as everything before it: AI.

It’s criminally easy to build things using generative AI. The latest models have made it possible to go from idea to implementation in a matter of hours. I’ve now built a number of side projects with Claude Code that I wouldn't have had the time or patience to build before. RAG and query assistant for all of my private documents? Check. Self-hosted journal? Check. Interior design assistant? Double-check. Some people even have the audacity to build products with these things. Now. If only I understood how they worked and how they got there.

I’ll freely admit that for most of the time I’ve been using them, LLMs have been a mystery to me. Training? Don’t know her. Inference? Who’s she? I had heard of a RAG, but I couldn’t tell you what the acronym stood for, or that it didn’t need an article. But over the past few months, inspired by practicing, I’ve done a deep dive into neural networks, language models, transformers, training, and inference. I’m fascinated by the infrastructure that powers AI and the systems that we’ve built around it. The machine is no longer a mystery. And yet, I find myself in awe of the ever-expanding capabilities of models and the rate at which they arrive.

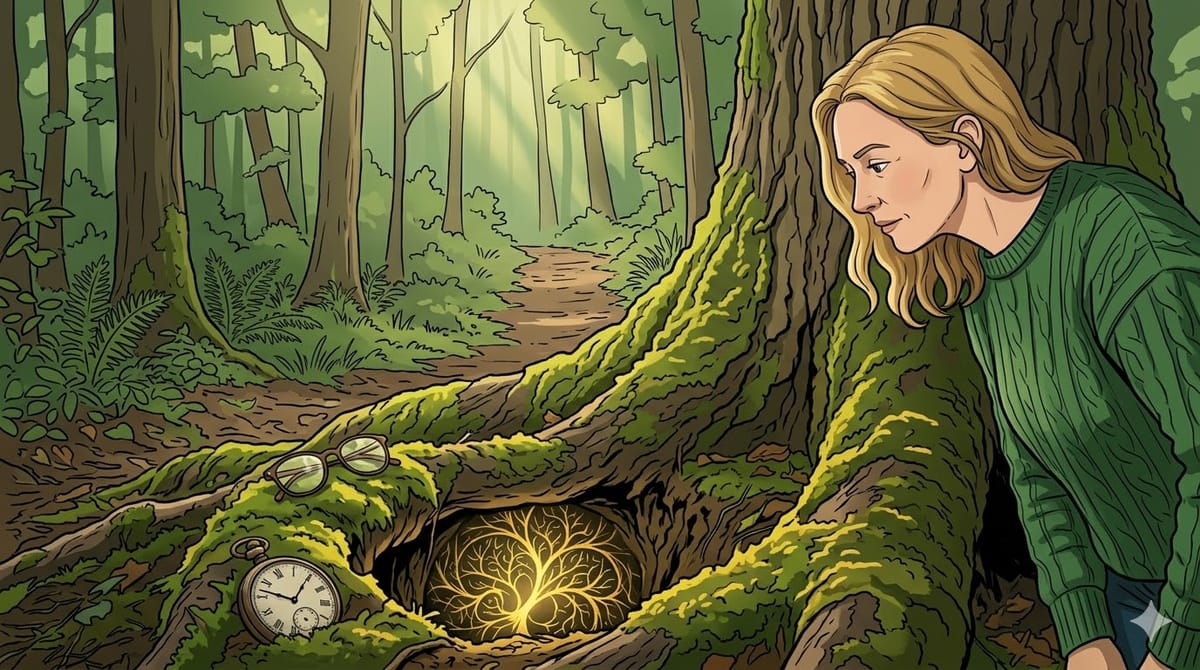

Diving headfirst into systems and software that are unfamiliar to me is something that I do at work a lot, but it’s been a long time since I’ve done it purely for the sake of the game. What started with grinding LeetCode led to learning about transformers and training my first model. Learning for the sake of learning, rather than merely to prepare for interviews, relieved the pressure. It made things fun. And so I find myself staring at the rabbit hole for the first time in a long time, thinking, “Shall I?”